Deterministic UX in a Stochastic World: The RLS Security Moat

The AI revolution is here, and with it, a frantic pace of innovation. Developers are building incredible multi-tenant applications, from personalized AI assistants to complex data analysis tools. The speed of development is exhilarating, but beneath the surface, a dangerous trend is emerging: a reliance on probabilistic controls for deterministic security. This isn't just a bad practice; it's a ticking data-leak time bomb. Enterprise trust, and indeed the future of multi-tenant AI, hinges on a pivot from "vibe-coding" to strict, database-level isolation.


The Vibe-Coding Hangover: Wrappers vs. Isolation

In the early days of any disruptive technology, rapid iteration is king. For AI, this has led to a culture of "vibe-coding" — quickly assembling API wrappers, prompt templates, and middleware to get a functional prototype. It's agile, it's intuitive, and it's how many foundational AI products have been born.


However, this approach carries a significant hangover, especially when building multi-tenant applications. While wrapping an LLM endpoint with a custom prompt is easy, earning enterprise trust requires an entirely different paradigm: deterministic isolation. Enterprises operate on auditability, compliance, and guaranteed data privacy. They need to know, with absolute certainty, that Tenant A's data will never be seen by Tenant B, regardless of user error, malicious intent, or a model's probabilistic quirks.

"Moving fast and breaking things" is an admirable philosophy for UI experiments, but it's an existential threat when it comes to customer data. The shortcuts taken in the name of speed often compromise the very foundation of secure, scalable multi-tenant architectures.

The Fallacy of Prompt-Based Security: A House of Cards

The most pervasive and perilous "vibe-coding" shortcut in multi-tenant AI is the reliance on prompt engineering for security. You've seen it, perhaps even implemented it:

"You are a helpful assistant for Tenant A. Do not ever reveal information about other tenants or their data. Only use data provided for Tenant A."

This isn't a security policy; it's a polite suggestion to a black box. And it is, unequivocally, a terrible security strategy.

Here's why prompt-based security is a house of cards:

- LLMs are Probabilistic, Not Deterministic: Large Language Models are sophisticated statistical models, not logic engines. They operate on probabilities, predicting the next token based on vast training data. Asking an LLM to "not reveal" information is asking a probabilistic system to enforce a deterministic rule. It will fail. It's not a question of if, but when.

- The Hallucination Vector: LLMs are known to "hallucinate", confidently generating plausible but incorrect information. The risk goes beyond factual errors: under certain conditions or with specific prompts, a model can also reproduce or infer data belonging to another tenant if that data was ever, however briefly, present in its context window or training data.

- Prompt Injection is a Feature, Not a Bug: The very flexibility that makes LLMs powerful also makes them vulnerable. Malicious users can craft prompt injections designed to bypass your system prompt directives, coaxing the model into revealing restricted information. Your "helpful assistant" can be turned into a data exfiltrator with a clever enough prompt.

- Context Window Contamination: In complex multi-tenant systems, it's incredibly challenging to guarantee that no data from Tenant B inadvertently enters Tenant A's LLM context window. Even if you filter upstream, a shared conversation history, a shared cache, or a subtle bug in your data retrieval logic could expose sensitive information.

- Scalability and Maintainability Nightmare: Imagine managing nuanced prompt logic for hundreds or thousands of tenants, each with potentially different data access rules. This approach rapidly becomes unmanageable, un-auditable, and prone to human error.

Relying on an LLM's "good behavior" for security is akin to securing a high-value vault with a handwritten note saying, "Please don't steal anything." It fundamentally misunderstands the nature of the technology and the requirements of data security.

The RLS Security Moat: Deterministic Boundaries with Supabase

The only robust, scalable, and auditable solution for multi-tenant data isolation is deterministic security at the database level. This is where PostgreSQL's Row Level Security (RLS) shines, particularly when implemented with a platform like Supabase.


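RLS establishes policies that dictate which rows a user can read, insert, update, or delete, and it applies them before any data leaves the database. It's a foundational security primitive, acting as a moat around your data, with one caveat: policies only bind the roles subject to them. Superusers, roles with the BYPASSRLS attribute, and (by default) the table's owner skip RLS, and in Supabase the service_role key bypasses it as well, so your application must query with the end user's credentials.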

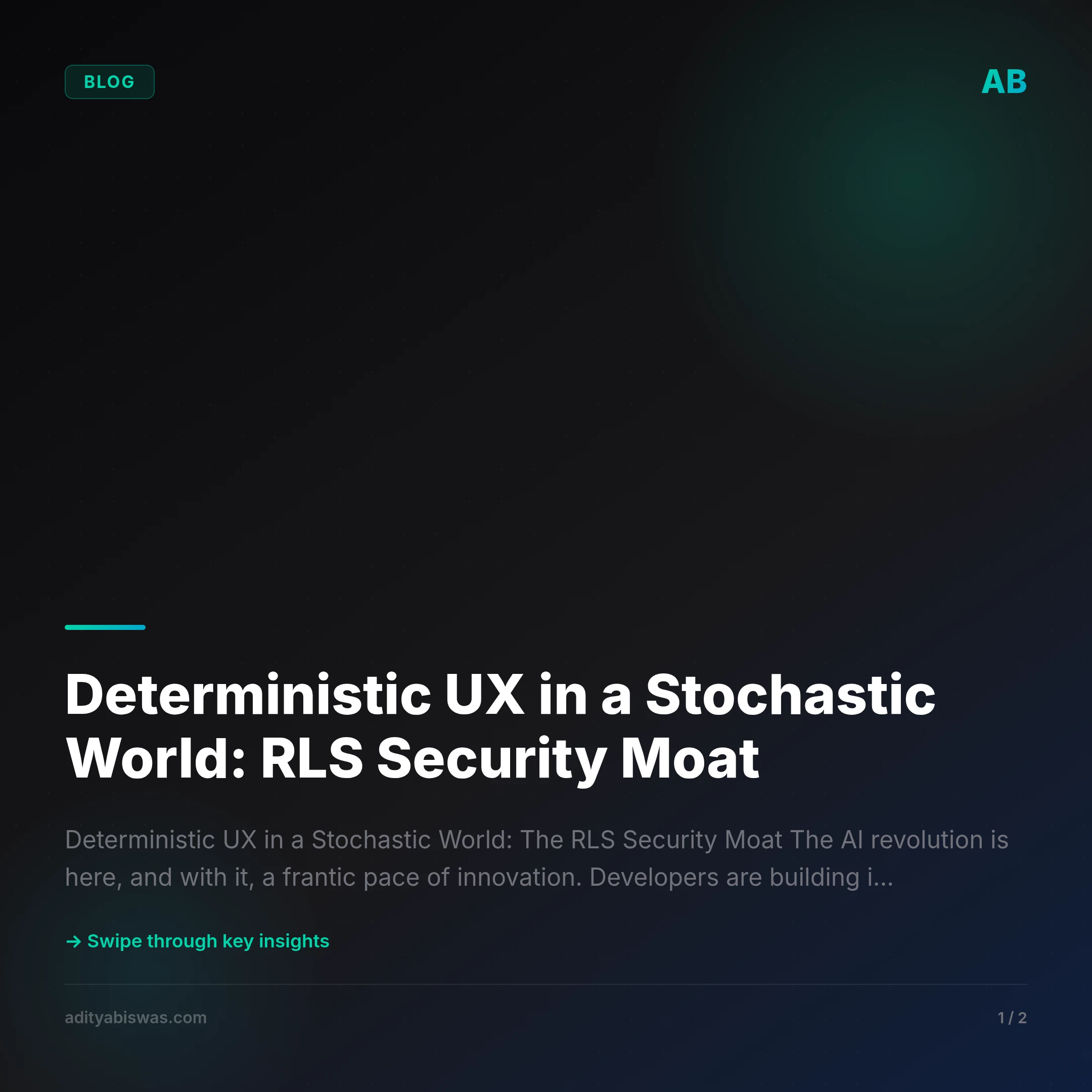

Here's how the RLS security moat works:

- Policy Enforcement, Not Suggestion: RLS policies are defined directly on your database tables and evaluated by the PostgreSQL server itself, for every query and every row. Rows that fail a policy are silently filtered out of reads, and writes that would violate it are rejected with an error. There's no negotiation, no probability, no "maybe."

- Integrated with Authentication: With Supabase, RLS integrates with the platform's built-in authentication. When a user logs in, they receive a JSON Web Token (JWT) carrying claims such as the user's ID (`sub`) and, if you add it yourself (for example with a custom access token hook), a `tenant_id`. For requests made through Supabase's API, these claims are exposed inside Postgres as the `request.jwt.claims` setting, which Supabase also wraps in the `auth.jwt()` helper.

- Example Policy (Conceptual): Imagine a `documents` table with a `tenant_id` column. An RLS policy might look something like this:

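```sql
-- Policies have no effect until RLS is enabled on the table.
ALTER TABLE documents ENABLE ROW LEVEL SECURITY;

CREATE POLICY tenant_isolation ON documents
  FOR ALL
  USING (tenant_id = (current_setting('request.jwt.claims', true)::jsonb ->> 'tenant_id')::uuid);
```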

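This policy ensures that a user can only see, insert, modify, or delete documents whose `tenant_id` column matches the `tenant_id` extracted from their authenticated JWT. Because the policy is `FOR ALL` and defines only a `USING` clause, PostgreSQL applies the same expression as the check on new and updated rows, so a user cannot write a document into another tenant. If the claim is missing, the comparison yields NULL and no rows match.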

- The LLM Can Hallucinate All It Wants: The critical insight here is that the database enforces its policies on every query the LLM triggers, and the model only ever receives rows that have already passed them (provided those queries run with the user's JWT rather than a key that bypasses RLS). If a user's prompt, however clever or malicious, leads the LLM to formulate a query for data outside the user's permitted `tenant_id`, PostgreSQL simply filters those rows out and returns an empty set, or, for a write, raises a policy-violation error. The LLM might still "hallucinate" text about data it *thinks* exists, but the underlying data remains inaccessible.
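To make the claim flow from the authentication step concrete, here is a minimal Python sketch (standard library only) that builds a demo token and reads its payload. It assumes a custom top-level `tenant_id` claim, which Supabase does not add by default; the token, its values, and the helper names `b64url_encode`, `b64url_decode`, and `read_claims` are hypothetical. `read_claims` only decodes the payload and does not verify the signature: in a real deployment the server verifies the token before its claims become visible to Postgres, so client-side decoding like this is for inspection and debugging only.

```python
import base64
import json


def b64url_encode(data: bytes) -> str:
    # JWT segments are base64url-encoded with the "=" padding stripped.
    return base64.urlsafe_b64encode(data).rstrip(b"=").decode("ascii")


def b64url_decode(segment: str) -> bytes:
    # Restore the stripped padding before decoding.
    return base64.urlsafe_b64decode(segment + "=" * (-len(segment) % 4))


def read_claims(token: str) -> dict:
    """Decode a JWT payload WITHOUT verifying its signature (inspection only)."""
    _header_b64, payload_b64, _signature = token.split(".")
    return json.loads(b64url_decode(payload_b64))


# Hypothetical, unsigned demo token; real tokens are issued and signed by the auth server.
header = b64url_encode(json.dumps({"alg": "HS256", "typ": "JWT"}).encode())
payload = b64url_encode(json.dumps({
    "sub": "user-123",
    "role": "authenticated",
    "tenant_id": "11111111-1111-1111-1111-111111111111",  # assumed custom claim
}).encode())
demo_token = f"{header}.{payload}.not-a-real-signature"

claims = read_claims(demo_token)
print(claims["tenant_id"])
```

Nothing in the policy trusts a tenant ID sent in a request body or chosen by the model; the only input it reads is the claim inside the verified token.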

> "The LLM can hallucinate all it wants, but Postgres physically rejects the query if the JWT doesn't match the tenant."

This is the non-negotiable guarantee. RLS ensures that your multi-tenant AI agents, however stochastic their internal workings, operate within a deterministic, ironclad security perimeter. Supabase simplifies the complex setup of RLS, making this enterprise-grade security accessible to every developer.
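To see why the outcome does not depend on what the model asks for, here is a toy, in-memory Python model of the `tenant_isolation` policy. It is not how PostgreSQL implements RLS, and `ToyTable`, `Row`, `PolicyViolation`, and `seed_as_admin` are hypothetical names. It mirrors two documented behaviours: on reads, rows that fail the `USING` expression are dropped silently and the policy is combined with the query's own filter; on writes to a `FOR ALL` policy with no `WITH CHECK`, the `USING` expression is applied to the new row and a violation raises an error.

```python
from dataclasses import dataclass
from typing import Callable, List


class PolicyViolation(Exception):
    """Raised when a write fails the policy, like an RLS violation error."""


@dataclass(frozen=True)
class Row:
    id: int
    tenant_id: str
    body: str


def tenant_isolation(row: Row, claims: dict) -> bool:
    # Same rule as the SQL policy: the row's tenant must equal the JWT claim.
    # A missing claim yields None and matches nothing, like NULL in SQL.
    return row.tenant_id == claims.get("tenant_id")


class ToyTable:
    """Hypothetical in-memory table guarded by one FOR ALL policy (USING only)."""

    def __init__(self, policy: Callable[[Row, dict], bool]) -> None:
        self._rows: List[Row] = []
        self._policy = policy

    def seed_as_admin(self, row: Row) -> None:
        # Models a role that bypasses RLS (e.g. a service key) loading data.
        self._rows.append(row)

    def select(self, claims: dict, where: Callable[[Row], bool] = lambda r: True) -> List[Row]:
        # Reads: the policy is ANDed with the caller's filter, so rows that
        # fail it are dropped silently whatever the WHERE clause asks for.
        return [r for r in self._rows if self._policy(r, claims) and where(r)]

    def insert(self, claims: dict, row: Row) -> None:
        # Writes: with no WITH CHECK clause, the USING rule also checks new
        # rows, and a violating row raises instead of being stored.
        if not self._policy(row, claims):
            raise PolicyViolation(f"new row {row.id} violates tenant_isolation")
        self._rows.append(row)


docs = ToyTable(tenant_isolation)
docs.seed_as_admin(Row(1, "tenant-a", "A's roadmap"))
docs.seed_as_admin(Row(2, "tenant-b", "B's salaries"))

alice = {"sub": "alice", "tenant_id": "tenant-a"}

# Whatever filter a prompt-injected agent generates, it can only narrow
# the rows the policy already allows.
leaked = docs.select(alice, where=lambda r: r.tenant_id == "tenant-b")
visible = [r.body for r in docs.select(alice)]

try:
    docs.insert(alice, Row(3, "tenant-b", "planted row"))
    write_rejected = False
except PolicyViolation:
    write_rejected = True

print(leaked, visible, write_rejected)
```

The `where` argument stands in for whatever SQL the LLM generated: even an injected filter for another tenant's rows narrows the result to nothing rather than widening it, and the cross-tenant write never lands.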

Conclusion: Build for Trust

In a world increasingly reliant on probabilistic AI, the need for deterministic security has never been more critical. The allure of "vibe-coding" with prompt-based security is a dangerous distraction from the fundamental requirements of data privacy and enterprise trust.

The RLS security moat is not merely a technical feature; it's a foundational primitive for building trustworthy, scalable, multi-tenant AI applications. By leveraging PostgreSQL's Row Level Security, especially through platforms like Supabase, creators can ensure that their applications offer not just incredible user experiences, but also an unshakeable promise of data isolation.

Shift your focus from hoping your AI behaves to guaranteeing your database enforces. Build for trust, not just for features. Your users, and their data, deserve nothing less.
