My entire digital life was a house of cards, and I was the one holding my breath every time the wind blew. For months, deploying updates to my projects—like the claw-biswas AI system or the antigravity platform—involved a fragile ritual of scping files, manually SSH-ing into a server, pulling git changes, killing a process, and restarting it inside a screen session. It worked, until it didn't. The breaking point came last Tuesday at 1 AM, when a simple dependency update brought everything down. I spent the next 90 minutes untangling a mess I had created, all because my "deployment process" was just a series of panicked commands.

That was it. I was done being a glorified FTP user. I was done pretending that artisanal, hand-crafted server management was some kind of indie-dev badge of honor. It’s not. It’s a liability. It’s a tax on your time and your sanity. This week, I paid the upfront cost to eliminate that tax for good. I stopped writing application code and started building a real system.

Photo by Ilya Pavlov on Unsplash

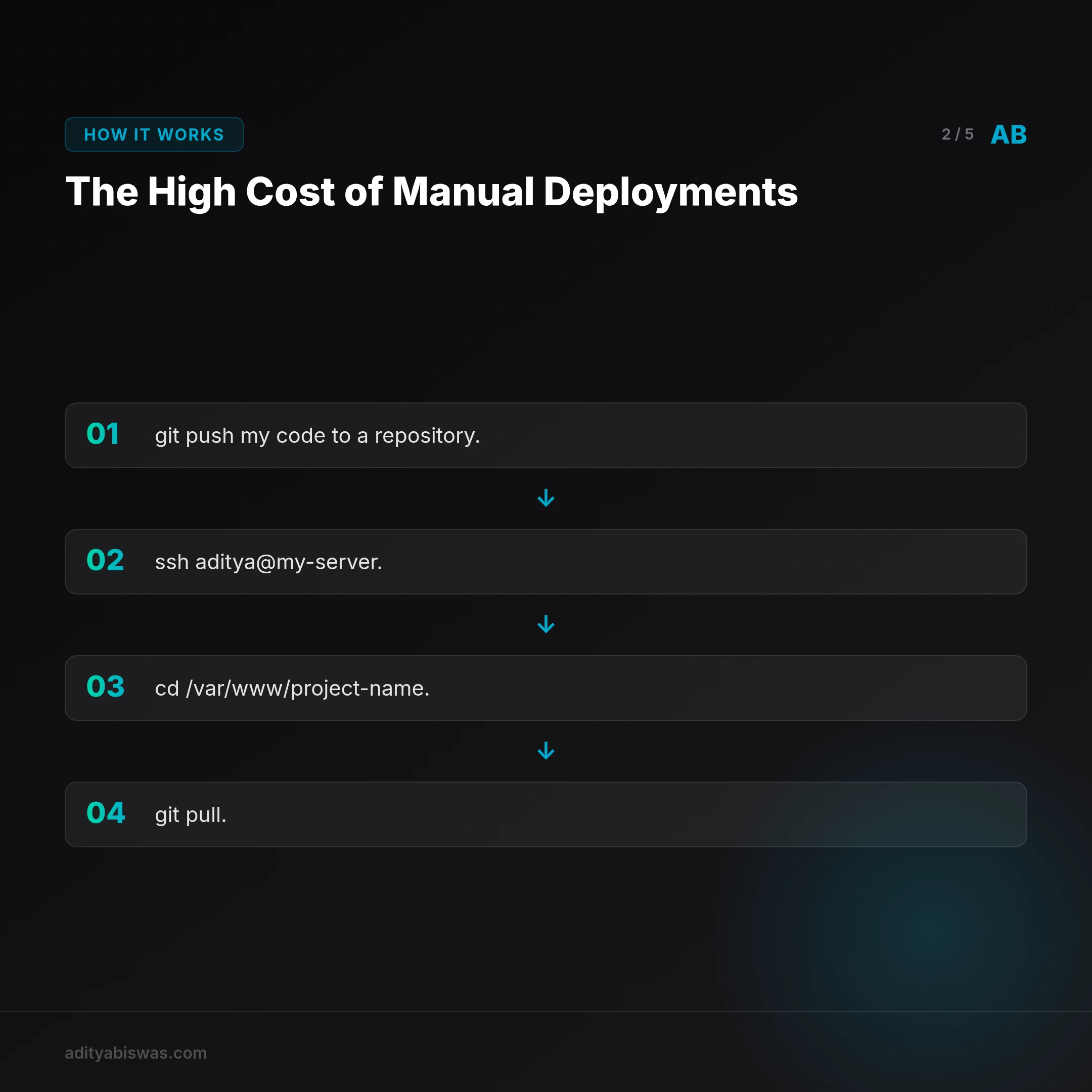

The High Cost of Manual Deployments

Let's be brutally honest about what my old "system" looked like. It was a collection of bad habits masquerading as a workflow. If you're a solo developer, this might sound painfully familiar.

My Old Workflow: A Recipe for Disaster

Every single deployment, no matter how small, involved this brittle, multi-step dance:

git pushmy code to a repository.ssh aditya@my-server.cd /var/www/project-name.git pull.- Manually stop the running application (

ps aux | grep pythonthenkill <pid>). - Run

pip install -r requirements.txtand pray there were no dependency conflicts with system libraries. - Manually edit the

.envfile usingvimif I needed to add a new secret. - Restart the application in the background:

nohup python3 app.py &. exitand hope for the best.

This wasn't just inefficient; it was dangerous. There were no atomic deploys or rollbacks. A bad deployment meant another frantic SSH session to fix things live on the production server. There was no consistency; a server I provisioned in January was configured slightly differently from one I set up last August. Environment variables were a nightmare, sometimes stored in .bashrc, sometimes in .env files, with no single source of truth.

The Hidden Tax on Productivity and Sanity

The biggest cost wasn't the risk of downtime. It was the cognitive overhead. Every time I wanted to ship a small feature, I had to mentally prepare for this fragile, 15-minute deployment ritual. It created a psychological barrier to shipping.

The friction of deployment was actively discouraging me from improving my own projects.

I found myself batching changes into huge, risky updates just to avoid the pain of deploying frequently. This is the definition of technical debt, and the interest payments were becoming crippling. It was time to declare bankruptcy on the old way and rebuild with a solid foundation.

Photo by Christopher Gower on Unsplash

The Solution: Infrastructure as Code with Ansible and Docker

For years, I associated tools like Ansible, Terraform, and Docker with large engineering teams and complex microservice architectures. My thinking was, "I'm just one person with a couple of VPS instances. That's overkill." I was completely wrong. These tools aren't about managing complexity; they're about preventing it. For a solo developer, automation is an even more critical force multiplier.

I decided on a two-part stack to bring sanity to my infrastructure:

- Docker: To containerize my applications for consistency.

- Ansible: To automate server configuration and application deployments.

Why Docker for Containerization?

Docker solves the "it works on my machine" problem forever. It packages an application and all its dependencies—the specific Python version, system libraries, environment variables—into a standardized, isolated unit called a container. This means the environment is 100% consistent from my laptop to the production server. No more dependency hell.

A simple Dockerfile for one of my Python apps looks like this:

# Use an official Python runtime as a parent image

FROM python:3.9-slim-buster

# Set the working directory in the container

WORKDIR /usr/src/app

# Copy the requirements file into the container

COPY requirements.txt ./

# Install any needed packages specified in requirements.txt

RUN pip install —no-cache-dir -r requirements.txt

# Copy the rest of the application's code

COPY . .

# Make port 80 available to the world outside this container

EXPOSE 80

# Define environment variable

ENV NAME World

# Run app.py when the container launches

CMD ["python", "app.py"]Why Ansible for Automation?

Ansible felt like the perfect fit for my scale. It's agentless, meaning it communicates over standard SSH without requiring any special software on the target server. Its playbooks use simple, human-readable YAML to define tasks. I didn't need to learn a whole new programming language; it felt like writing a to-do list for my server that could be executed flawlessly every single time.

My first goal was an Ansible playbook to provision a brand-new server. It handles creating a non-root user, setting up UFW firewall rules, installing Fail2Ban for security, and, crucially, installing Docker and Docker Compose.

Here’s a snippet from my setup.yml playbook that installs Docker:

- name: Install system packages required for Docker

apt:

name:

— apt-transport-https

— ca-certificates

— curl

— software-properties-common

— python3-pip

state: latest

update_cache: yes

- name: Add Docker GPG apt Key

apt_key:

url: https://download.docker.com/linux/ubuntu/gpg

state: present

- name: Add Docker Repository

apt_repository:

repo: "deb [arch=amd64] https://download.docker.com/linux/ubuntu {{ ansible_lsb.codename }} stable"

state: present

- name: Update apt and install docker-ce

apt:

name: docker-ce

state: latest

update_cache: yesThis is declarative. I'm not telling the server how to install Docker; I'm describing the final state I want it to be in. This is the fundamental shift: from fragile, imperative commands to a robust, declarative state. Now, I can spin up a new server from any provider, run one command—ansible-playbook setup.yml—and in five minutes, have a perfectly configured, secure, and ready-to-use machine.

The Payoff: From Manual Chaos to a One-Command Deploy

With the server configuration handled by Ansible, the final piece was automating the deployment itself. The new workflow is a world away from the manual mess I had before.

Orchestrating the Pipeline with GitHub Actions

Now, my deployment process is integrated directly into my Git workflow. When I'm ready to deploy a new version of claw-biswas:

- I merge my feature branch into

main. - I run

git push origin main. - This push automatically triggers a GitHub Action workflow.

- The action checks out the code, then uses the

docker/build-push-actionto build a new Docker image from the project's Dockerfile. - The image is tagged with the latest git commit SHA for precise versioning (e.g.,

my-repo/claw-biswas:a1b2c3d). - The action pushes the newly tagged image to my private Docker Hub repository.

- A final step in the action calls my Ansible deployment playbook. This playbook securely SSHs into the production server, pulls the new Docker image by its specific tag, and gracefully restarts the service using the new image.

The entire process takes about three minutes, is completely hands-off, and is repeatable and reliable. If a deployment is bad, the old container keeps running. Rolling back is as simple as re-running the workflow with a previous commit SHA.

The impact has been immediate. This week, I've deployed over a dozen small fixes and improvements. Before, that would have been an entire afternoon of tedious, risky work. Now, it happens in the background while I'm already focused on the next problem.

I've gone from fearing deployment to being bored by it—and that is the ultimate success.

Photo by Arnold Francisca on Unsplash

The Next Frontier: Building for Observability

This new foundation is just the beginning. While I've solved the core problem of configuration and deployment, the system is still missing a key component of a truly professional setup: observability. Right now, if an application has a problem, I still need to ssh into the server and check the logs with docker logs <container_name>. This is the last vestige of the old, manual way of thinking.

My next steps are focused on two areas:

- Centralized Logging: I'm exploring tools like Grafana Loki. The goal is to have all my application and system logs automatically stream to a central, searchable dashboard. This will allow me to diagnose issues without ever needing to SSH into a production machine.

- Monitoring and Alerting: I plan to introduce Prometheus to scrape metrics from my applications (like response times, error rates, and resource usage) and Grafana to visualize them in dashboards. I'll then configure Alertmanager to send a notification to my phone if a key metric—like CPU usage or application latency—crosses a critical threshold.

Building this system has been a profound lesson. The tools aren't the point. The point is building a framework of confidence that allows you to move faster, build better products, and sleep better at night.

Frequently Asked Questions

Q: Is Ansible better than Terraform for a solo developer?

A: They serve different purposes. Terraform is for provisioning infrastructure (creating servers, databases, networks), while Ansible is for configuring that infrastructure (installing software, managing files). For a solo dev starting out, Ansible is often easier to learn and can handle both simple provisioning and configuration, making it a great first step.

Q: Do I really need Docker for a simple web app?

A: Need? No. But it will save you countless hours of future pain. Docker eliminates environment drift between development and production, simplifies dependency management, and makes your application portable across any server or cloud provider. The small upfront learning curve pays for itself almost immediately.

Q: How much does this automation setup cost?

A: The software itself—Ansible, Docker, GitHub Actions (with a generous free tier)—is free. The only costs are for your private Docker Hub repository (a few dollars a month for the Pro plan) and the servers you run your applications on. The real return on investment is the time and sanity you get back.

References

- Ansible Documentation

- Docker Official Getting Started Guide

- What is Infrastructure as Code? by HashiCorp

- GitHub Actions Documentation

Related Reading

- The Prompts Behind Everything — Discover how I engineer production-grade AI prompts to automate my entire content pipeline, from newsletters and blog posts to moderation, ensuring...